
This article focuses on the business criteria for evaluating whether a predictive model is ready for production, and on the risk involved when its predictions are wrong. A simple, practical framework is used for this evaluation, illustrated with three examples.

Today, businesses regularly use predictive analytics to optimize their business and achieve better business outcomes. There are countless examples of predictive analytics in marketing, manufacturing, real estate, software testing, healthcare, and many more. Predicting the future gives businesses a competitive advantage.

Predictive models use historical data to predict future trends. For example, Amazon and Netflix use predictive analytics to engage their customers and offer a better end-to-end user experience. Amazon uses customers’ purchase history to recommend products that may interest them. Netflix uses past viewing history to recommend TV shows and movies.

Deploying a model means its predictions are ready to be consumed by users in their everyday decision-making process. New data comes in, the data is passed to the model, and the model produces predictions. These predictions are then delivered to the user through a dashboard or another user interface.
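The deployment loop just described can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: `score_batch`, the `predict_proba` callable, the `publish` callback, and the toy scoring rule are all hypothetical, and a plain list stands in for the dashboard.

```python
from typing import Callable, Dict, List

Record = Dict[str, float]


def score_batch(
    records: List[Record],
    predict_proba: Callable[[Record], float],
    publish: Callable[[Dict[str, object]], None],
    threshold: float = 0.5,
) -> int:
    """Score each new record with the model and send the result to the user-facing sink.

    Returns the number of records scored.
    """
    scored = 0
    for record in records:
        probability = predict_proba(record)  # model output for the positive class
        publish({
            "id": record["id"],
            "probability": probability,
            "predicted_positive": probability >= threshold,
        })
        scored += 1
    return scored


def toy_churn_model(record: Record) -> float:
    # Hypothetical stand-in for a trained model: more support tickets -> higher churn probability.
    return min(1.0, record["support_tickets"] / 4)


dashboard: List[Dict[str, object]] = []  # stands in for a dashboard or report
n = score_batch(
    [{"id": 1, "support_tickets": 3}, {"id": 2, "support_tickets": 0}],
    toy_churn_model,
    dashboard.append,
)
```

In a real deployment, `predict_proba` would wrap a trained model and `publish` would write to whatever store the dashboard reads from.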

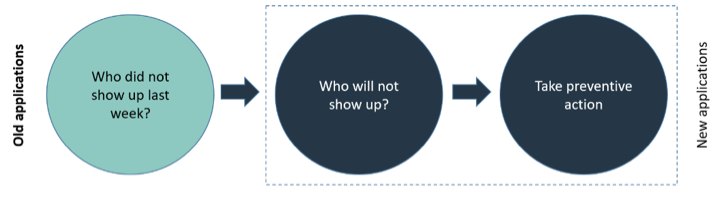

One interesting use case of predictive analytics in healthcare (or even the travel industry) is predicting, and reducing, the number of missed appointments. Missed appointments cost the healthcare industry an average of $150 billion annually, which works out to around $200 for every missed hour-long visit. A model can use past trends to predict who is likely to skip an appointment, and it can also suggest preventive actions to take.

Before I describe a practical way to evaluate model risk, I want to mention a few terms data scientists typically use for classification models. Classification models predict which of two (or more) classes something belongs to. Examples include:

- Will this customer churn?

- Will this patient need to be screened for cancer?

- Will this customer buy this product?

- Will it rain tomorrow?

The technical evaluation metrics data scientists most commonly use are precision, recall, and F1 score. I will not explain those terms here. Here is a reference of interest.
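For readers who want a concrete anchor, the sketch below computes the standard definitions of these three metrics from confusion-matrix counts. The counts are made up for illustration; in practice, scikit-learn's `precision_score`, `recall_score`, and `f1_score` (in `sklearn.metrics`) compute the same quantities directly from label arrays.

```python
from typing import Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Standard precision, recall, and F1 for the positive class from confusion-matrix counts."""
    # Precision: of everything the model flagged as positive, how much really was positive?
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: of everything that really was positive, how much did the model flag?
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Illustrative counts only: 40 true positives, 10 false positives, 20 false negatives.
p, r, f1 = precision_recall_f1(tp=40, fp=10, fn=20)
print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")  # precision=0.80 recall=0.67 f1=0.73
```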

When accuracy comes up, the typical question is whether it is 80%, 90%, or 95%, and which of these is good enough to deploy. But the real question is not whether it's 70%, 80%, or 90%.

Say the model achieves 80% accuracy. This means it gets 20% of its predictions wrong. What is the cost, or the impact, of those incorrect predictions on the business? Is it manageable or severe? That is the most important question to answer.

Let’s take the example of a customer churn predictive model. Say its accuracy is 80%, which means the model is wrong 20% of the time.
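To see why accuracy alone cannot settle the deployment question, the sketch below compares two hypothetical churn models that are both 80% accurate on 1,000 customers but split their 200 errors differently. The per-error dollar costs are assumptions chosen for illustration, not figures from any real business.

```python
def error_cost(false_positives: int, false_negatives: int, cost_fp: float, cost_fn: float) -> float:
    """Total business cost of a model's errors, given a cost per error type."""
    return false_positives * cost_fp + false_negatives * cost_fn


COST_FALSE_ALARM = 20.0    # hypothetical: promotion given to a customer who was not going to churn
COST_MISSED_CHURN = 500.0  # hypothetical: revenue lost when a churner is not flagged

n_customers = 1000
models = {
    "model_a": {"fp": 150, "fn": 50},  # errs toward flagging loyal customers
    "model_b": {"fp": 50, "fn": 150},  # errs toward missing churners
}

results = {}
for name, errors in models.items():
    accuracy = 1 - (errors["fp"] + errors["fn"]) / n_customers
    cost = error_cost(errors["fp"], errors["fn"], COST_FALSE_ALARM, COST_MISSED_CHURN)
    results[name] = (accuracy, cost)
    print(f"{name}: accuracy={accuracy:.0%}, cost of errors=${cost:,.0f}")
# model_a: accuracy=80%, cost of errors=$28,000
# model_b: accuracy=80%, cost of errors=$76,000
```

Same accuracy, nearly three times the cost: under these assumed costs, which errors a model makes matters more than how many.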

Business questions to ask to evaluate the predictive model

- What is the cost / pain / risk involved for the customers the model incorrectly predicted as churn candidates even though they are not? What is the implication of this incorrect prediction?

- What is the cost / pain / risk for the customers the model incorrectly predicted as non-churn even though they are churn candidates? What is their appetite for this risk? What is the implication of this incorrect prediction?

What is the implication of this incorrect prediction?

These two questions are essential for evaluating the risk of a wrong prediction. Sometimes only one of them has serious implications; sometimes both do.

In this case, if non-churn customers are flagged as churn candidates, they will probably get some extra attention and promotions from the vendor. There may be some additional cost, but ultimately this helps improve loyalty.

Incorrectly predicting churn candidates as non-churn, on the other hand, hurts the business, because every customer who churns affects the bottom line. So it's important to get this right so we do not lose customers.

We will explore this topic with 2 more examples.

The next example is a cancer-screening scenario, where we are trying to predict whether a patient needs to be screened for a specific cancer (high or low risk).

Business questions to ask to evaluate the predictive model

- What is the cost / pain / risk involved for the patients the model incorrectly deemed high risk even though they are not? What is the implication of this incorrect prediction?

- What is the cost / pain / risk involved for the patients the model incorrectly deemed low risk even though they are high-risk patients? What is their appetite for this risk? What is the implication of this incorrect prediction?

What is the implication of this incorrect prediction?

- The cost of incorrectly tagging a patient who is not high risk as high risk is more of a nuisance (or a minor financial loss) than a serious risk, because the error will be cleared up when they come in for the checkup.

- The cost of incorrectly tagging a high-risk patient as low risk is very high. These patients miss the opportunity to be diagnosed and treated early, which would improve their chances of survival.

Hence the balance between precision and recall should be chosen to address the business problem and the risk of getting it wrong. In this case, the model should be tuned so that as many high-risk patients as possible are identified; in other words, recall on the high-risk class is the priority.
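One common way to act on this is to choose the model's decision threshold from a recall target rather than from overall accuracy. The sketch below assumes the model outputs a risk score per patient, with label 1 meaning high risk; `threshold_for_recall`, `precision_recall_at`, and the scores themselves are hypothetical illustrations. scikit-learn's `precision_recall_curve` offers a library route to the same trade-off.

```python
import math
from typing import Sequence, Tuple


def threshold_for_recall(scores: Sequence[float], labels: Sequence[int], target_recall: float) -> float:
    """Highest threshold (flag when score >= threshold) whose recall on label 1 meets target_recall."""
    positive_scores = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    if not positive_scores:
        raise ValueError("need at least one positive (high-risk) example")
    # How many high-risk patients must be flagged; the small epsilon guards against float round-up.
    needed = max(1, math.ceil(target_recall * len(positive_scores) - 1e-9))
    return positive_scores[needed - 1]


def precision_recall_at(scores: Sequence[float], labels: Sequence[int], threshold: float) -> Tuple[float, float]:
    """Precision and recall on label 1 when every score >= threshold is flagged."""
    flagged = [y for s, y in zip(scores, labels) if s >= threshold]
    true_positives = sum(flagged)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / sum(labels) if sum(labels) else 0.0
    return precision, recall


# Made-up risk scores and ground truth (1 = high risk) for eight patients.
scores = [0.95, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 1, 0, 0]

for target in (0.75, 1.0):
    t = threshold_for_recall(scores, labels, target)
    p, r = precision_recall_at(scores, labels, t)
    print(f"target recall {target:.2f}: threshold={t:.2f} precision={p:.2f} recall={r:.2f}")
# target recall 0.75: threshold=0.60 precision=0.75 recall=0.75
# target recall 1.00: threshold=0.30 precision=0.67 recall=1.00
```

Requiring every high-risk patient to be caught lowers precision here, meaning more low-risk patients are sent for screening. That is the side of the trade-off the cancer example argues is acceptable.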

Many customers (especially in life sciences) ask vendors to fill out a 200- to 300-line IT security compliance questionnaire before they will share data with them or deploy their software internally. Say you have a predictive model that predicts whether a vendor is security compliant based on its answers to this long list of questions.

Business questions to ask to evaluate the predictive model

- What is the cost / pain / risk when the model incorrectly predicts a vendor as compliant even though it is non-compliant? What is their appetite for this risk? What is the implication of this incorrect prediction?

- What is the cost / pain / risk for the vendors the model incorrectly predicted as not compliant even though they are compliant? What is their appetite for this risk? What is the implication of this incorrect prediction?

What is the implication of this incorrect prediction?

- Depending on the situation, the cost of incorrectly tagging a compliant vendor as non-compliant may be more of a nuisance than a big risk, because the vendor can appeal and provide further documentation to clear it up.

- The cost of incorrectly tagging a vendor as compliant even though it is non-compliant is very high. This carries significant business risk, because you would be sharing sensitive data with a vendor that is not compliant.

That is the business-risk question to ponder: which error should we optimize for?
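One standard way to encode the answer is a cost-sensitive decision threshold. Assume the model outputs a calibrated probability p that a vendor is non-compliant and that correct decisions cost nothing. Then clearing the vendor has expected cost p * C_missed, flagging it has expected cost (1 - p) * C_false_alarm, and flagging is the cheaper choice whenever p >= C_false_alarm / (C_false_alarm + C_missed). The cost values below are hypothetical relative units, not real figures.

```python
def flag_threshold(cost_false_alarm: float, cost_missed: float) -> float:
    """Probability of non-compliance above which flagging is the cheaper decision."""
    return cost_false_alarm / (cost_false_alarm + cost_missed)


def decide(p_noncompliant: float, cost_false_alarm: float, cost_missed: float) -> str:
    # Expected cost of clearing the vendor:  p_noncompliant * cost_missed
    # Expected cost of flagging the vendor: (1 - p_noncompliant) * cost_false_alarm
    # Flag when clearing would cost at least as much as flagging.
    if p_noncompliant >= flag_threshold(cost_false_alarm, cost_missed):
        return "flag for review"
    return "clear"


# Hypothetical relative costs: a wrongful flag costs 1 unit (an appeal),
# clearing a non-compliant vendor costs 50 units (exposed sensitive data).
COST_FALSE_ALARM, COST_MISSED = 1.0, 50.0

print(round(flag_threshold(COST_FALSE_ALARM, COST_MISSED), 4))  # 0.0196
print(decide(0.05, COST_FALSE_ALARM, COST_MISSED))  # flag for review
print(decide(0.01, COST_FALSE_ALARM, COST_MISSED))  # clear
```

Under these assumed costs, any vendor with roughly a 2% or higher estimated chance of non-compliance should be flagged, far below a default 0.5 cutoff.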

Here is a generalized version of this framework for understanding the business risk when a model gets a prediction wrong. Say we are predicting two classes, ClassA and ClassB (for example, high risk and low risk).

Business questions to ask to evaluate the predictive model

- What is the cost / pain / risk involved for the customers / patients the model incorrectly predicted as ClassA even though they are ClassB? What is the implication of this incorrect prediction?

- What is the cost / pain / risk involved for the customers / patients the model incorrectly predicted as ClassB even though they are ClassA? What is their appetite for this risk? What is the implication of this incorrect prediction?
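Answering these questions starts with knowing how often each kind of error occurs. The sketch below counts the two error types from labeled data and weights them by a per-error cost. The labels and cost values are hypothetical placeholders to replace with your own, and `error_breakdown` and `total_error_cost` are illustrative helpers, not a library API.

```python
from collections import Counter
from typing import Dict, Sequence, Tuple

ErrorType = Tuple[str, str]  # (actual class, predicted class)


def error_breakdown(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[ErrorType, int]:
    """Count each (actual, predicted) pair where the prediction was wrong."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return dict(Counter((t, p) for t, p in zip(y_true, y_pred) if t != p))


def total_error_cost(errors: Dict[ErrorType, int], cost_per_error: Dict[ErrorType, float]) -> float:
    """Weight each error type by its business cost and sum."""
    return sum(n * cost_per_error[kind] for kind, n in errors.items())


y_true = ["ClassA", "ClassB", "ClassB", "ClassA", "ClassB", "ClassA"]
y_pred = ["ClassA", "ClassA", "ClassB", "ClassB", "ClassA", "ClassA"]

# Hypothetical costs: calling a ClassB case ClassA is cheap, the reverse is expensive.
cost_per_error = {
    ("ClassB", "ClassA"): 10.0,   # actually ClassB, predicted ClassA
    ("ClassA", "ClassB"): 100.0,  # actually ClassA, predicted ClassB
}

errors = error_breakdown(y_true, y_pred)
for (actual, predicted), n in sorted(errors.items()):
    print(f"predicted {predicted} but actually {actual}: {n}")
print("total cost:", total_error_cost(errors, cost_per_error))
# predicted ClassB but actually ClassA: 1
# predicted ClassA but actually ClassB: 2
# total cost: 120.0
```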

If you can answer these questions, you will know the business risk of getting a prediction wrong and can communicate this insight to stakeholders. It's very important to share this information with all stakeholders so they know how much to trust the current model before it is put into production for practical use.

Here are a few example models you can use to practice this framework.

- Does this machine need maintenance?

- Is this transaction a fraud?

- Will this patient show up for the appointment?

- Will this customer buy this product?

Use the following table and replace the text as you see fit to go through this analysis and refer to the examples above for further reference.

Predictive models are bringing new innovations that help companies achieve their outcomes. They use past trends to predict future ones. Everyone wants 100% accuracy, even when their current way of predicting is no better than a coin toss. But there is a threshold at which a model can be put into production, provided the impact of wrong predictions on the business is clearly understood.

The important part is truly understanding the implication to the business when the predictions are wrong. This needs to be clearly discussed and validated with the end customer for them to trust this model and use it every day for their decision making.
