Internal links on a website are an important organic ranking factor for Google. Links help Google both discover pages and assign rankings based on quantity and location. A page with 100 internal links is presumably a higher priority than one with a single link.

But neither purpose, discovery or ranking, is possible if Googlebot cannot crawl the links. This can happen in three main ways:

- Links behind JavaScript. Google can usually crawl and render links in JavaScript, such as those in tabs and collapsible sections. But not always, especially if the JavaScript requires execution first.

- Links on the desktop version but not on mobile. Google indexes a site’s mobile version by default. However, mobile pages are often downsized desktop versions with fewer links, preventing Google from discovering and indexing the excluded pages.

- Links with a nofollow attribute or meta tag. Google claims it can follow links with nofollow attributes, but there is no way to know if that happened. And the meta tag blocks crawls only if Googlebot responds to it. Moreover, many site owners are unaware of active nofollow attributes or meta tags, especially if they use a plugin such as Yoast, which adds those functions with a single click.

Even if a page is indexed, you can never be sure the links to or from that page are crawlable and thus pass link equity.

Here are three ways to ensure Googlebot can crawl links on your site.

Tools to Inspect Links

Google’s text cache. The text-only version of Google Cache represents how Google sees a page with CSS and JavaScript turned off. It is not how Google indexes a page, as Google can now understand pages as humans see them.

So a page’s text cache is a stripped-down version. Still, it is the most reliable way to tell if Google can crawl your links. If those links are in the text-only cache, Google can crawl them.

Beyond text-only, Google Cache contains the indexed version of a page. It is a useful way of identifying missing elements on the mobile version.

Many search optimizers overlook Google Cache. That’s a mistake. All essential ranking elements are there. There is no other way to confirm Google has that critical information.

To access any page’s text-only version of Google Cache, search Google for cache:[full-URL] and click “Text-only version.”

Search Console. Not all pages will appear in Google Cache. If a page is missing, use “URL Inspection” in Search Console or browser extensions for details on how Google renders it.

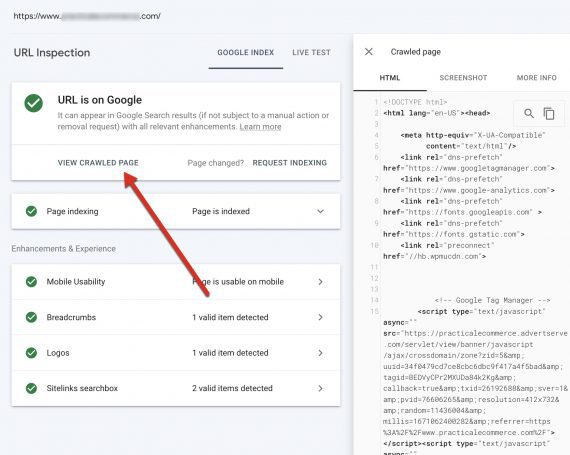

“URL Inspection” in Search Console shows any page as Google understands it. Enter the URL and then click “View crawled page.”

From there, copy the HTML that Google uses to read the page. Paste that HTML into a document such as Google Docs and search (CTRL+F on Windows or CMD+F on Mac) for the linking URLs you are verifying. If the URLs are in the HTML code, Google can see them.
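For more than a handful of URLs, the manual search can be scripted. The following is a minimal sketch, assuming you have saved the crawled HTML from Search Console as a string. The `LinkCollector` class, the `missing_links` function, and the example.com URLs are hypothetical names used for illustration only; they are not part of any Google tool.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in an HTML document."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative hrefs against the page URL so they can be
            # compared with the absolute URLs being verified.
            self.links.add(urljoin(self.base_url, href))


def missing_links(crawled_html, page_url, expected_urls):
    """Return the expected URLs that do not appear as links in the HTML."""
    collector = LinkCollector(page_url)
    collector.feed(crawled_html)
    return [url for url in expected_urls if url not in collector.links]


# Hypothetical stand-in for HTML copied from "View crawled page".
sample_html = """
<html><body>
  <a href="/shoes/">Shoes</a>
  <a href="https://www.example.com/hats/">Hats</a>
</body></html>
"""

result = missing_links(
    sample_html,
    "https://www.example.com/",
    ["https://www.example.com/shoes/", "https://www.example.com/belts/"],
)
print(result)  # ['https://www.example.com/belts/']
```

The comparison is an exact string match, so a trailing slash or a tracking parameter counts as a different URL. An empty result means every URL you expected is present in the HTML Google crawled; it says nothing about whether Google followed those links.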

Browser extensions. Once you confirm Google can see the links, make sure they are crawlable. Examining the code will reveal both the nofollow attribute and the meta tag. Firefox has a native tool to load a page’s HTML via CTRL+U on Windows and CMD+U on Mac. Then search for “nofollow” in the code.

The NoFollow browser extension, available for Firefox and Chrome, highlights nofollow links as a page loads, whether the nofollow comes from a link attribute or a meta tag.
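The same check can be run on saved page source. This is a rough sketch, assuming the HTML is available as a string. It looks for a robots meta tag containing a `nofollow` directive and for individual links whose `rel` attribute includes `nofollow`. The `NofollowFinder` class and the sample markup are hypothetical, and the script is not how the NoFollow extension works internally.

```python
from html.parser import HTMLParser


class NofollowFinder(HTMLParser):
    """Flags a page-level nofollow meta tag and link-level rel="nofollow"."""

    def __init__(self):
        super().__init__()
        self.page_nofollow = False   # True if a robots meta tag says nofollow
        self.nofollow_links = []     # hrefs of links marked rel="nofollow"

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta":
            name = (attrs.get("name") or "").lower()
            content = (attrs.get("content") or "").lower()
            directives = [d.strip() for d in content.split(",")]
            if name in ("robots", "googlebot") and "nofollow" in directives:
                self.page_nofollow = True
        elif tag == "a":
            # rel can hold several values, e.g. "nofollow noopener".
            rel_tokens = (attrs.get("rel") or "").lower().replace(",", " ").split()
            if "nofollow" in rel_tokens and attrs.get("href"):
                self.nofollow_links.append(attrs["href"])


# Hypothetical page source, as it might appear after CTRL+U.
sample_html = """
<html><head><meta name="robots" content="index, nofollow"></head>
<body>
  <a href="/about/">About</a>
  <a href="/login/" rel="nofollow noopener">Log in</a>
</body></html>
"""

finder = NofollowFinder()
finder.feed(sample_html)
print(finder.page_nofollow)   # True
print(finder.nofollow_links)  # ['/login/']
```

Matching on parsed attributes rather than a plain text search for “nofollow” avoids false hits from the word appearing in body copy or scripts.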

Not Definitive

None of these methods definitively tells you whether the links affect rankings. Google’s algorithm is highly sophisticated and assigns meaning and weight to links as it chooses, including ignoring them. Nonetheless, accessing and crawling links is Google’s first step.
